The MVP Testing Framework: 7 Tests Before You Build Anything

Here’s a number that should terrify you: in CB Insights’ analysis of startup post-mortems, 42% of failed startups cited “no market need” as a reason they failed.

Not because of bad code. Not because they ran out of money. Not because of competition. They built something nobody wanted. And in almost every case, they could have figured that out before writing a single line of code.

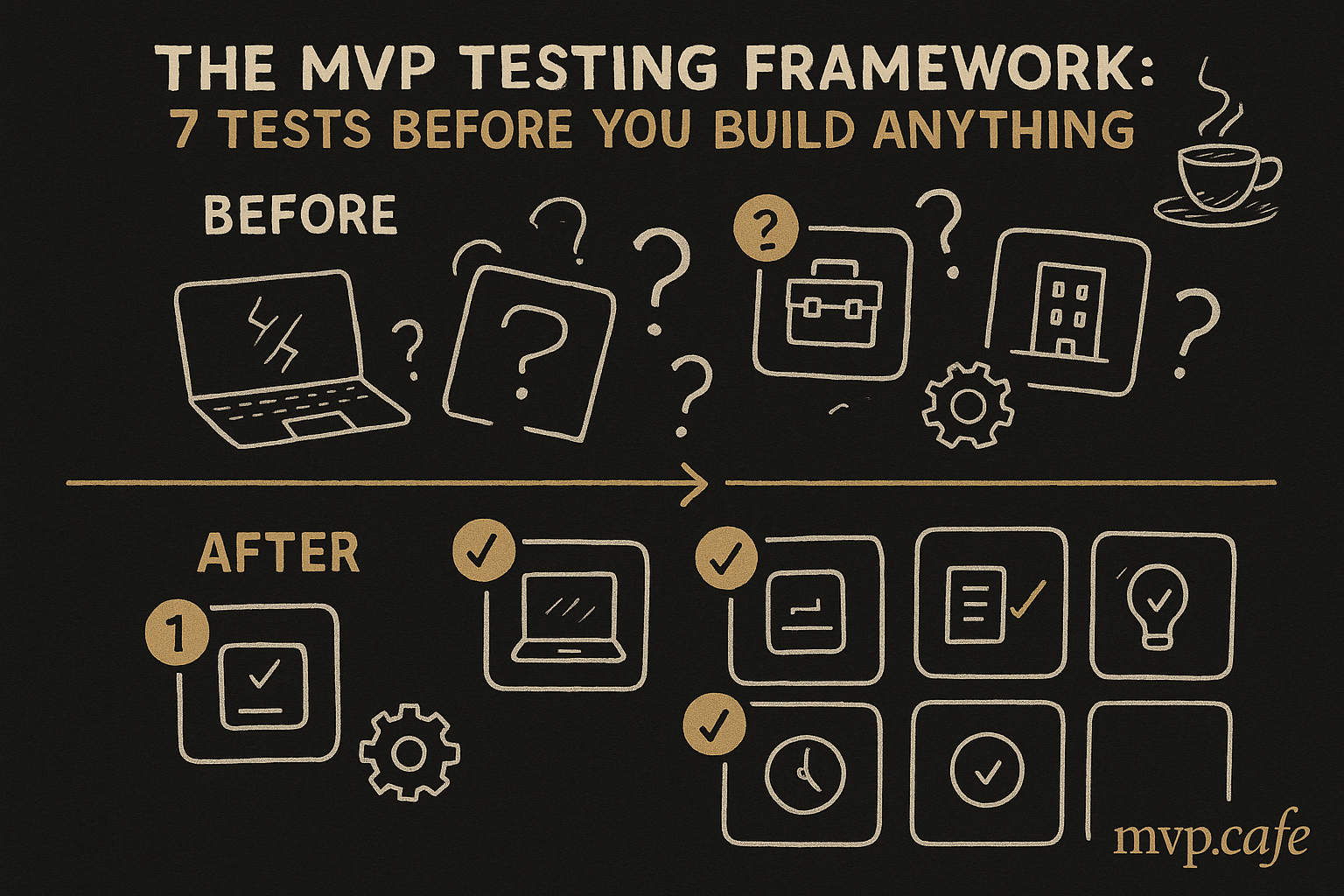

This testing framework is the antidote. Seven tests. Each one takes 1-3 days. Total cost: under $200 in most cases. If your idea survives all seven, build with confidence. If it fails at test two, you just saved yourself months and thousands of dollars.

Why Most Founders Skip Validation

Let’s be honest about why founders skip testing:

- Excitement bias — “I’m so sure this will work that testing feels like a waste of time”

- Builder identity — “I’m a builder, not a researcher. I’ll build and they’ll come”

- Competitor fear — “If I don’t build fast, someone else will steal my idea”

- Validation theater — “I asked 5 friends and they said it’s a great idea” (they lied)

None of these survive contact with reality. The founders who consistently win are the ones who test ugly hypotheses before building pretty products.

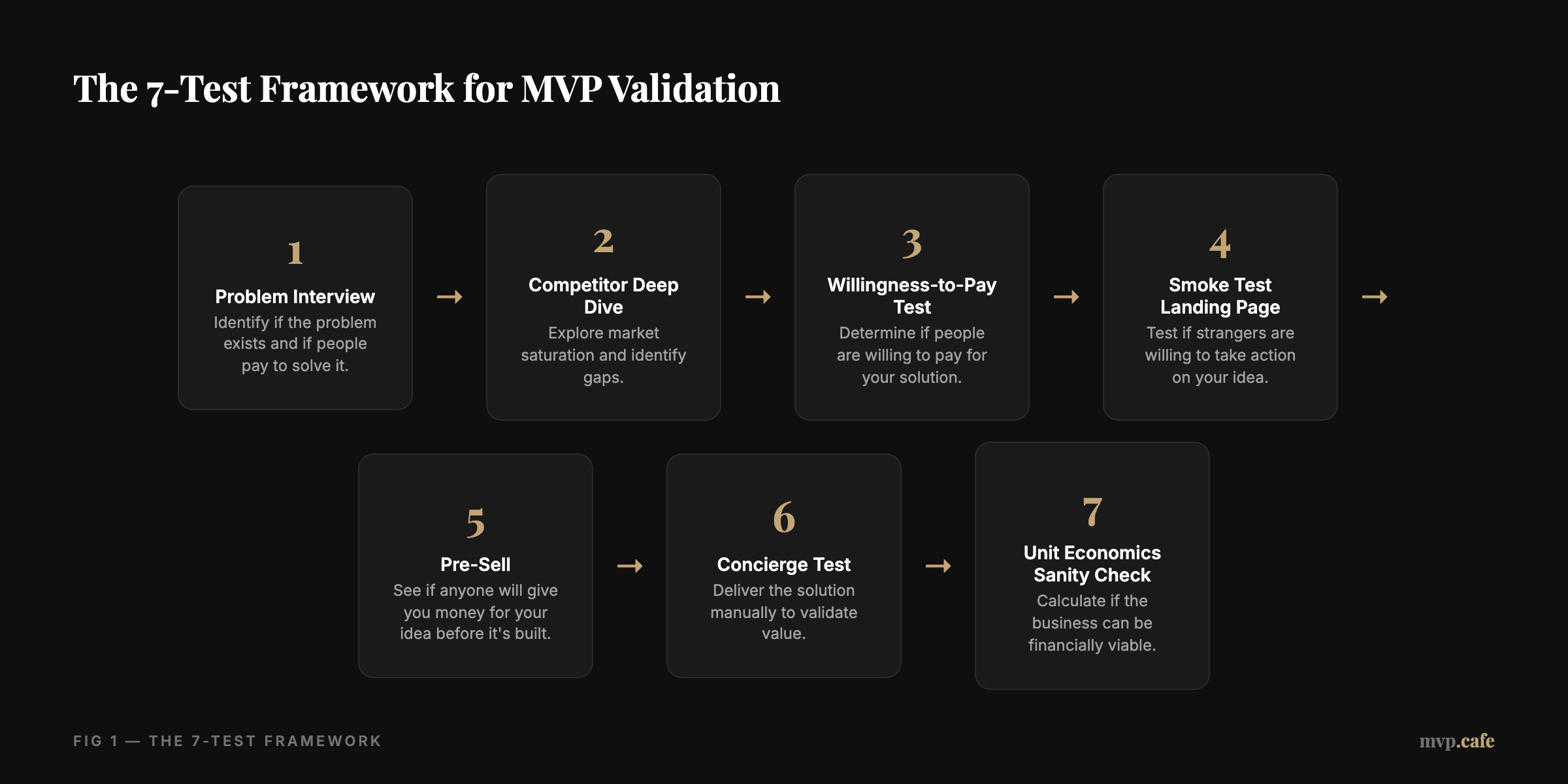

The 7-Test Framework

Test 1: The Problem Interview (Days 1-2)

What you’re testing: Does this problem actually exist for real people who’d pay to solve it?

How to run it:

- Find 10 people in your target audience (not friends, not family)

- Have a 15-minute conversation with each

- Never mention your solution. Only discuss the problem

- Ask these exact questions:

  - “When was the last time you dealt with [problem]?”

  - “What did you do about it?”

  - “What was the most frustrating part?”

  - “Have you tried any solutions? What did you like/hate?”

  - “How much time/money do you spend dealing with this?”

Passing criteria:

- 7+ out of 10 describe the problem unprompted with emotional language (“it drives me crazy,” “I waste hours on this”)

- At least 3 have actively searched for solutions

- At least 2 have spent money trying to solve it

Failing criteria:

- People say “yeah, that’s annoying I guess” (lukewarm = no market)

- They describe the problem differently than you imagined

- Nobody has tried to solve it — it might not hurt enough to pay for

Common mistake: Leading questions. “Don’t you hate when X happens?” will always get a yes. Ask open-ended questions and let them tell you.
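The passing criteria above come down to three counts, so it can help to tally them right after each conversation. Here is a minimal sketch, assuming all three thresholds must hold together and that you log each interview as three yes/no fields. The `Interview` class and its field names are hypothetical, not part of the framework.

```python
from dataclasses import dataclass


@dataclass
class Interview:
    # Hypothetical fields; fill them in right after each conversation.
    described_unprompted: bool   # raised the problem with emotional language
    searched_for_solution: bool  # has actively looked for a fix
    spent_money: bool            # has paid for an attempted fix


def problem_interview_passes(interviews: list[Interview]) -> bool:
    """Apply the Test 1 thresholds, which are stated for 10 interviews."""
    unprompted = sum(i.described_unprompted for i in interviews)
    searched = sum(i.searched_for_solution for i in interviews)
    paid = sum(i.spent_money for i in interviews)
    return unprompted >= 7 and searched >= 3 and paid >= 2


# Invented results for ten interviews.
interviews = (
    [Interview(True, True, True)] * 2
    + [Interview(True, True, False)] * 2
    + [Interview(True, False, False)] * 4
    + [Interview(False, False, False)] * 2
)
print(problem_interview_passes(interviews))  # True: 8 unprompted, 4 searched, 2 paid
```

Recording the three fields per person, rather than a single gut-feel score, makes it harder to round a lukewarm conversation up to a yes.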

Test 2: The Competitor Deep Dive (Days 2-3)

What you’re testing: Is the market already saturated, or is there a real gap?

How to run it:

- Search Google for every way someone might describe your solution

- Search Product Hunt, G2, Capterra, Reddit for alternatives

- Sign up for every competitor’s free tier

- Map them on a 2x2 matrix: Price (low/high) × Feature depth (simple/complex)

What to document for each competitor:

- Pricing model

- Primary audience

- Key features

- What their reviews complain about (this is GOLD)

- What they’re missing

Passing criteria:

- There are competitors (proves the market exists) BUT there’s a clear gap: underserved segment, pricing opportunity, or feature miss

- Competitor reviews consistently complain about the same thing — and that’s what you’d solve

Failing criteria:

- No competitors at all (market might not exist)

- 10+ well-funded competitors covering every angle (red ocean)

- The gap you’d fill is too small to build a business around

Common mistake: “We have no competition!” Almost always means you haven’t looked hard enough, or the market doesn’t exist.
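The 2x2 matrix from the steps above can be kept in a few lines of code once you have a price and a rough feature count for each competitor. This is only a sketch: the two cutoffs and the competitor entries are made-up placeholders, and counting features is one assumed proxy for "feature depth" among many.

```python
from collections import defaultdict

# Hypothetical cutoffs; choose ones that make sense for your market.
PRICE_CUTOFF = 20.0   # monthly price in dollars separating "low" from "high"
FEATURE_CUTOFF = 10   # feature count separating "simple" from "complex"


def quadrant(monthly_price: float, feature_count: int) -> tuple[str, str]:
    """Place one competitor on the Price x Feature depth matrix."""
    price = "high" if monthly_price >= PRICE_CUTOFF else "low"
    depth = "complex" if feature_count >= FEATURE_CUTOFF else "simple"
    return price, depth


def map_competitors(
    competitors: dict[str, tuple[float, int]]
) -> dict[tuple[str, str], list[str]]:
    """Group competitor names by quadrant."""
    grid = defaultdict(list)
    for name, (price, features) in competitors.items():
        grid[quadrant(price, features)].append(name)
    return dict(grid)


# Made-up competitors for illustration only: (monthly price, feature count).
competitors = {
    "Competitor A": (8.0, 4),
    "Competitor B": (49.0, 25),
    "Competitor C": (12.0, 6),
}
grid = map_competitors(competitors)
all_quadrants = [(p, d) for p in ("low", "high") for d in ("simple", "complex")]
empty = [q for q in all_quadrants if q not in grid]
print(grid)
print("Empty quadrants:", empty)
```

An empty quadrant is a candidate gap, not proof of one. Check it against what competitor reviews complain about before treating it as an opportunity.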

Test 3: The Willingness-to-Pay Test (Day 3)

What you’re testing: Will people actually open their wallets?

How to run it:

- Go back to 5 of your Problem Interview participants

- Describe your solution in one sentence (keep it simple)

- Ask: “If this existed today, what would you pay for it?”

- Then use the Van Westendorp method:

  - “At what price would this be so cheap you’d doubt its quality?”

  - “At what price would this be a bargain?”

  - “At what price would this start to feel expensive?”

  - “At what price would this be too expensive to consider?”

Passing criteria:

- 3+ people name a specific price (not “I dunno, maybe free”)

- The “bargain” price leaves you room for a viable business

- At least 1 person says “I’d buy that right now”

Failing criteria:

- Everyone says “I’d use it if it were free” (no willingness to pay)

- Price points are so low the unit economics don’t work

- “That’s cool but my company would never approve the budget”

Common mistake: Asking friends. They’ll say they’d pay to be supportive. Ask strangers or weak acquaintances who have no reason to lie.
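A full Van Westendorp analysis plots cumulative curves for the four questions and reads prices off their intersections, which needs far more than five respondents to mean much. As a rough first pass, the sketch below simply takes the median answer to each question and checks whether the median "bargain" price clears a floor. This is a simplification, not the full method. The responses are invented and `MIN_VIABLE_PRICE` is a hypothetical number you would derive from your own costs.

```python
from statistics import median

QUESTIONS = ("too_cheap", "bargain", "expensive", "too_expensive")


def median_prices(responses: list[dict[str, float]]) -> dict[str, float]:
    """Median answer to each of the four price questions."""
    return {q: median(r[q] for r in responses) for q in QUESTIONS}


# Invented answers from five interviewees, in dollars per month.
responses = [
    {"too_cheap": 2, "bargain": 6, "expensive": 12, "too_expensive": 20},
    {"too_cheap": 3, "bargain": 7, "expensive": 15, "too_expensive": 25},
    {"too_cheap": 1, "bargain": 5, "expensive": 10, "too_expensive": 18},
    {"too_cheap": 4, "bargain": 8, "expensive": 14, "too_expensive": 20},
    {"too_cheap": 3, "bargain": 7, "expensive": 12, "too_expensive": 22},
]

MIN_VIABLE_PRICE = 5.0  # hypothetical: the lowest price your costs allow

prices = median_prices(responses)
print(prices)
print("Bargain price leaves room:", prices["bargain"] >= MIN_VIABLE_PRICE)
```

With these invented answers the medians come out at $3, $7, $12, and $20, so a price between the "bargain" and "expensive" medians would be the range to test in the pre-sell.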

Test 4: The Smoke Test Landing Page (Days 3-4)

What you’re testing: Do strangers care enough to take action?

How to run it:

- Build a one-page landing page (Carrd, Framer, or even Google Sites — it doesn’t matter)

- Describe the problem, your solution, and the key benefit

- Add ONE call-to-action: “Join the waitlist” or “Get early access”

- Drive traffic: $50-100 on Google Ads targeting your keywords, or post in 3-5 relevant communities

Metrics to track:

- Visitor → email signup conversion rate

- Where signups came from

- Any replies to your confirmation email

Passing criteria:

- 5%+ email signup rate from cold traffic

- 10%+ signup rate from community posts

- People reply to the confirmation email asking when it launches

Failing criteria:

- Under 2% signup rate despite targeted traffic

- High bounce rate (people don’t even read the page)

- Nobody replies to follow-ups

Common mistake: Spending a week making the landing page beautiful. Ugly + clear beats beautiful + confusing. Every time.
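The signup thresholds above are easy to misjudge by eye, so here is a small sketch that turns visitor and signup counts into a verdict per traffic source. The function name and the "inconclusive" middle band are assumptions added for illustration. The article itself only defines the pass and fail ends.

```python
# Thresholds taken from the passing and failing criteria above.
PASS_RATE = {"cold": 0.05, "community": 0.10}
FAIL_RATE = 0.02


def signup_verdict(visitors: int, signups: int, source: str) -> str:
    """Classify one traffic source as 'pass', 'fail', or 'inconclusive'."""
    if visitors == 0:
        return "inconclusive"  # no traffic means no signal either way
    rate = signups / visitors
    if rate >= PASS_RATE[source]:
        return "pass"
    if rate < FAIL_RATE:
        return "fail"
    return "inconclusive"


# Invented traffic numbers.
print(signup_verdict(400, 24, "cold"))       # 6% of cold traffic -> pass
print(signup_verdict(250, 20, "community"))  # 8% of community traffic -> inconclusive
print(signup_verdict(300, 4, "cold"))        # about 1.3% -> fail
```

Keep sample size in mind: with only 100 visitors, a single signup moves the rate by a full percentage point, so a result near a threshold is worth more traffic before you call it.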

Test 5: The Pre-Sell (Days 4-5)

What you’re testing: Will someone give you actual money?

How to run it:

- Create a Stripe payment link or Gumroad page

- Offer a “Founding Member” deal: 50% off, limited to 10 spots

- Email your waitlist + post in communities

- Be transparent: “We’re building this now. Founding members get [early access + input on features + lifetime discount]”

Passing criteria:

- 1+ actual purchase (even $1 proves willingness to pay)

- 3+ people click the payment link (intent matters)

- Conversations that start with “When will this be ready?”

Failing criteria:

- Zero clicks on the payment link

- People say “I’ll wait until it’s built” (translation: I’ll never buy)

- Refund requests or “I thought this was free”

Common mistake: Feeling sleazy about selling something that doesn’t exist yet. This is standard practice. Kickstarter is literally this. Just be honest about the timeline.

Test 6: The Concierge Test (Days 5-7)

What you’re testing: Can you deliver the value manually before building technology?

How to run it:

- Take your first 3-5 customers (from pre-sell or waitlist volunteers)

- Deliver your solution by hand: spreadsheets, email, phone calls, manual work

- Track exactly how much time each “unit” takes

- Get feedback after each delivery

What to learn:

- Does the solution actually solve the problem?

- What do customers value most? (Often different from what you assumed)

- What’s the real cost of delivery? (Time × your hourly rate)

- What would they want added or changed?

Passing criteria:

- Customers get real value (they tell you, unprompted)

- You can see a clear path to automating the manual work

- Retention: they’d want to use it again

- A likelihood-to-recommend rating of 8+ (on the 0-10 scale used for NPS) from at least 60% of testers

Failing criteria:

- Customers are underwhelmed (“That’s it?”)

- The manual process reveals the product is far more complex than you thought

- Nobody would pay the price that makes the economics work

Common mistake: Over-delivering during concierge (spending 5 hours per customer on what you’d automate to 5 minutes). Deliver what the product would deliver — nothing more.
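Two of the concierge numbers are plain arithmetic: the cost of one delivery (time × your hourly rate) and the share of testers who rate the experience 8 or higher. A minimal sketch follows. The $40 hourly rate and the ratings are invented, and the 45 minutes is borrowed from the study guide example later in this article.

```python
def delivery_cost(minutes_per_unit: float, hourly_rate: float) -> float:
    """Cost of one manual delivery: time spent times your hourly rate."""
    return minutes_per_unit / 60 * hourly_rate


def share_rating_at_least(ratings: list[int], floor: int = 8) -> float:
    """Fraction of testers whose 0-10 rating is at or above the floor."""
    return sum(r >= floor for r in ratings) / len(ratings)


cost = delivery_cost(minutes_per_unit=45, hourly_rate=40)  # hypothetical rate
ratings = [9, 8, 10, 6, 8]                                  # invented ratings
share = share_rating_at_least(ratings)

print(f"Manual delivery cost per unit: ${cost:.2f}")  # $30.00
print(f"Share rating 8+: {share:.0%}")                 # 80%
print("Meets the 60% bar:", share >= 0.60)
```

The manual cost is the ceiling, not the target. What matters for Test 7 is the cost you expect after automating the steps you tracked here.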

Test 7: The Unit Economics Sanity Check (Day 7)

What you’re testing: Can this be a real business, not just a cool product?

Calculate these numbers:

- Customer Acquisition Cost (CAC): What did you spend to get each test customer? (Ad spend + the value of your time, divided by the number of customers acquired)

- Revenue per customer: What they’d pay per month or per purchase

- Delivery cost per customer: Based on your concierge test, what does it cost to serve one customer?

- Gross margin: (Revenue minus delivery cost) ÷ revenue, as a percentage

- LTV estimate: Revenue per customer per month × estimated months of retention

- LTV:CAC ratio: Must be 3:1 or better for a viable business

Passing criteria:

- LTV:CAC ratio of 3:1 or better

- Gross margin > 60% (for software/SaaS)

- You can see a path to reducing CAC through organic channels

- Unit economics improve at scale (delivery cost goes down per customer)

Failing criteria:

- LTV:CAC ratio < 2:1 with no clear path to improve

- Gross margin < 40% (you’ll burn cash forever)

- Customer acquisition requires paid ads with no organic path

- The math only works at massive scale you can’t reach bootstrapped
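The calculations in this test fit in one short function. The sketch below uses the numbers from the study guide example later in this article ($3 CAC, $7/month revenue, $0.50 delivery cost, 6 months of retention) and assumes one delivery per customer per month. It checks only the two numeric passing criteria. The organic-channel and scale questions still need your judgment. The function names are illustrative.

```python
def unit_economics(
    cac: float,
    monthly_revenue: float,
    monthly_delivery_cost: float,
    retention_months: float,
) -> dict[str, float]:
    """Compute gross margin, LTV, and the LTV:CAC ratio for one customer."""
    gross_margin = (monthly_revenue - monthly_delivery_cost) / monthly_revenue
    ltv = monthly_revenue * retention_months  # revenue-based LTV, as in this article
    return {"gross_margin": gross_margin, "ltv": ltv, "ltv_to_cac": ltv / cac}


def passes_numeric_criteria(metrics: dict[str, float]) -> bool:
    """LTV:CAC of 3:1 or better and gross margin above 60%."""
    return metrics["ltv_to_cac"] >= 3 and metrics["gross_margin"] > 0.60


metrics = unit_economics(
    cac=3, monthly_revenue=7, monthly_delivery_cost=0.50, retention_months=6
)
print(f"Gross margin: {metrics['gross_margin']:.0%}")  # 93%
print(f"LTV: ${metrics['ltv']:.0f}")                    # $42
print(f"LTV:CAC = {metrics['ltv_to_cac']:.0f}:1")       # 14:1
print("Passes:", passes_numeric_criteria(metrics))
```

LTV here is based on revenue, matching the definition above. Some teams multiply it by gross margin for a stricter figure, which would lower the ratio slightly.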

The Scoring System

After running all 7 tests, score your idea:

| Tests Passed | Verdict |
|---|---|
| 7/7 | 🟢 Build it. Strong signal across every dimension |
| 5-6/7 | 🟡 Build cautiously. Investigate the failed tests. Can you pivot to fix them? |
| 3-4/7 | 🟠 Pause and rethink. The core might be there but the market isn’t ready or the model doesn’t work |
| 0-2/7 | 🔴 Kill it. Move to your next idea. This saved you $10K+ and months of your life |
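If you track results in a script or spreadsheet export, the table above maps directly onto a small lookup. This is a sketch with hypothetical names. The verdict strings are shortened from the table and the test results are invented.

```python
def verdict(tests_passed: int) -> str:
    """Map the number of tests passed (0-7) to the verdict in the table."""
    if not 0 <= tests_passed <= 7:
        raise ValueError("tests_passed must be between 0 and 7")
    if tests_passed == 7:
        return "Build it"
    if tests_passed >= 5:
        return "Build cautiously"
    if tests_passed >= 3:
        return "Pause and rethink"
    return "Kill it"


# Invented results: True means the test passed.
results = {
    "problem_interview": True,
    "competitor_deep_dive": True,
    "willingness_to_pay": False,
    "smoke_test": True,
    "pre_sell": False,
    "concierge": True,
    "unit_economics": True,
}
passed = sum(results.values())
failed = [name for name, ok in results.items() if not ok]
print(f"{passed}/7: {verdict(passed)}")   # 5/7: Build cautiously
print("Investigate:", failed)
```

Listing the failed tests alongside the score matters because a 5/7 that fails willingness to pay is a different problem from a 5/7 that fails the competitor check.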

Real Example: How We’d Test an “AI Study Guide Generator”

Idea: Upload your lecture notes, get a personalized study guide with practice questions.

Test 1 (Problem Interview): Talk to 10 college students. 9/10 describe studying as painful and time-consuming. 6 have tried Quizlet, ChatGPT. The problem is real. ✅

Test 2 (Competitor Deep Dive): Quizlet (flashcards, not study guides), ChatGPT (generic, not personalized to their notes), Notion AI (requires manual setup). Gap exists: automated, personalized study guides from uploaded notes. ✅

Test 3 (Willingness to Pay): Students say $5-10/month. “Bargain” at $7. “Too expensive” at $20. Economics are tight but workable at scale. ✅

Test 4 (Smoke Test): Landing page gets a 12% signup rate from Reddit r/college posts and 8% from targeted Instagram ads. Both clear their thresholds (10% for community posts, 5% for cold traffic). ✅

Test 5 (Pre-Sell): “Founding member” at $4/month. 7 purchases from 200 signups. 3.5% conversion. ✅

Test 6 (Concierge): Manually create study guides using ChatGPT + formatting. Takes 45 min per guide. Students love the output but want faster turnaround. Can automate with API. ✅

Test 7 (Unit Economics): CAC $3 (Reddit organic), Revenue $7/mo, Delivery cost $0.50/guide (API calls), Gross margin 93% (assuming one guide per customer per month), Estimated LTV $42 (6-month retention). LTV:CAC = 14:1. ✅

Score: 7/7. Build this.

What If You Don’t Have 7 Days?

Here’s the compressed 48-hour version:

Day 1:

- Morning: 5 problem interviews (DMs, phone calls, coffee chats)

- Afternoon: Competitor deep dive + willingness-to-pay conversations

- Evening: Build smoke test landing page

Day 2:

- Morning: Launch landing page, run $50 in ads, post in 3 communities

- Afternoon: Send pre-sell offer to anyone who bites

- Evening: Do 1-2 concierge deliveries if possible, run unit economics

You’ll miss nuance, but you’ll catch the fatal flaws.

Take Your Build Score

Not sure where to start? The Build Score assessment tells you exactly where your idea stands — and which tests to prioritize.

Take the free Build Score assessment →

If you score high but want expert help running these tests, book a Strategy Sprint. In 90 minutes, we’ll map your validation plan and identify the tests that matter most for your specific idea.

Book a Strategy Sprint ($197) →

The best MVP you can build is the one you’ve already proven people want. Test first. Build second. Regret never.